Rocky road towards ultimate UDP server with BPF based load balancing on Linux: part 2

In first part of this article we evaluated multiple possible options to load balance UDP traffic between multiple sockets using SO_REUSEPORT capability.

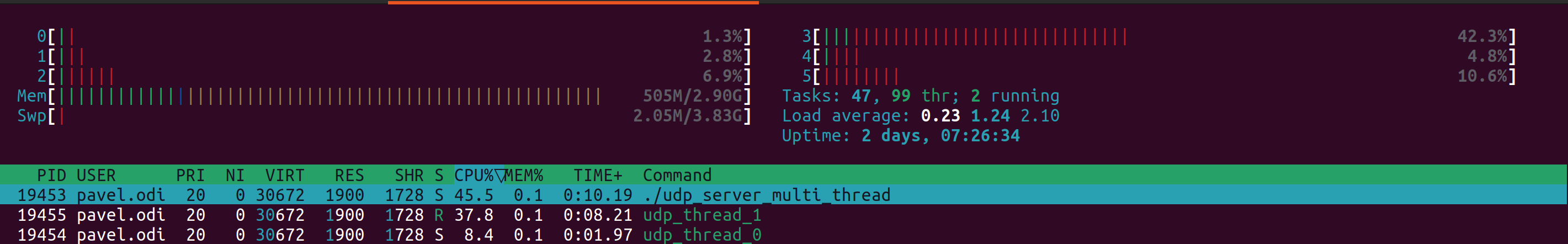

Due to nature of traffic in my particular case (majority of traffic is coming from single device) standard Linux kernel load balancing algorithm did not distribute it well and we were limited in options to use more threads to process UDP traffic. For tests in this article I used Ubuntu 22.04 with Linux kernel 6.2.0-26-generic.

To achieve even traffic distribution between sockets / threads we will use classic BPF microcode loaded into Linux Kernel which will be used only for sole purpose to find what is the best thread ID for particular UDP packet. From this very simple microcode we will have access to UDP packet itself and multiple network stack specific variables (you can find full list here in section Possible BPF extensions are shown in the following table) such as:

- len skb->len

- proto skb->protocol

- type skb->pkt_type

- poff Payload start offset

- ifidx skb->dev->ifindex

- mark skb->mark

- queue skb->queue_mapping

- hatype skb->dev->type

- rxhash skb->hash

- cpu raw_smp_processor_id()

- vlan_tci skb_vlan_tag_get(skb)

- vlan_avail skb_vlan_tag_present(skb)

- vlan_tpid skb->vlan_proto

- rand prandom_u32()

I can recommend doing detailed evaluation of protocol you work with to find out the best approach to distribute traffic. In my case we decided to do random distribution over all available threads using rand extension.

In addition to classic BPF microcode which can be loaded using SO_ATTACH_REUSEPORT_CBPF Linux kernel supports SO_ATTACH_REUSEPORT_EBPF which uses significantly more advance eBPF. My particular case is very simple and I do not need all advanced tricks available in eBPF and I prefer to keep things simple. If you've reached limitations of classic BPF then eBPF may be your choice.

What is the best way to write BPF microcode? You may manually write it in opcodes as documented in documentation from FreeBSD. Don't worry BPF is almost same on Linux and FreeBSD.

I found some examples in different guides and was able to create my microcode in opcodes:

struct sock_filter bpf_random_load_distribution[3] = {

/* Load random to A */

{ BPF_LD | BPF_W | BPF_ABS, 0, 0, 0xfffff038 },

/* A = A % mod */

{ BPF_ALU | BPF_MOD, 0, 0, threads_per_port },

/* return A */

{ BPF_RET | BPF_A, 0, 0, 0 },

};

Step by step logic is following:

- We generate random 32 bit integer accessible via address 0xfffff038

- Then load it to accumulator A.

- Then we use modulo operation and divide A by number of threads we have in place.

- After that we load result from modulo division to accumulator A.

- As last step we return value of accumulator A to Linux Kernel.

You may just copy it as is but if you want to implement alternative logic it may get exceptionally tricky to do so in opcodes.

What is the alternative option? We can write it in BPF assembler. In this case my microcode will have following look:

; Load random 32 bit value to A

ld rand

; Mod divide register A by number of worker threads

mod #4

ret aIf you had experience with assembler previously you may find it way easier to understand.

To compile this code into sock_filter format we can use Python module bpf-asm. It can be installed that way:

sudo pip3 install bpf-asmAfter that we can compile assembler into C structure compatible with BPF:

pybpf_asm reuseport_thread_distribution_bpf_random.txt -cIn my case I got following output:

{ 0x20, 0, 0, 0xfffff038 },

{ 0x94, 0, 0, 0x00000004 },

{ 0x16, 0, 0, 0x00000000 },It looks different from my hand written opcodes but it's complete equivalent to it:

{ BPF_LD | BPF_W | BPF_ABS, 0, 0, 0xfffff038 },

{ BPF_ALU | BPF_MOD, 0, 0, threads_per_port },

{ BPF_RET | BPF_A, 0, 0, 0 }The only difference that manually written code is easier to understand as we used symbolical constants. If you do not like Python based bpf-asm for some reasons you may use C based bpf_asm tool in Linux kernel sources and install it that way:

wget https://cdn.kernel.org/pub/linux/kernel/v6.x/linux-6.4.12.tar.xz

tar -xf linux-6.4.12.tar.xz

cd linux-6.4.12/tools/bpf

sudo apt install -y binutils-dev libreadline-dev bison flex

make

sudo cp bpf_asm /optAnd compile command is similar:

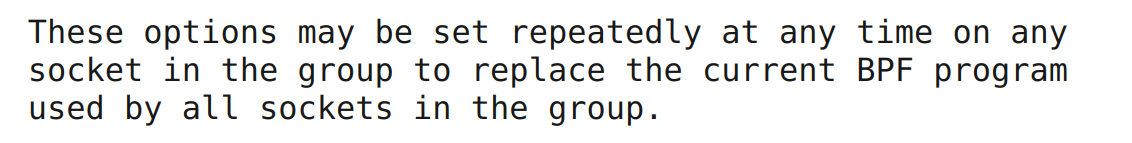

/opt/bpf_asm -c reuseport_thread_distribution_bpf_random.txtThe last remaining step is to load our microcode into Linux kernel for reuse_port load balancing. We will start from modifying our multi thread UDP server. In official man pages from Linux we have pretty relaxed description about ways to load it:

I did my best in reading documentation and I've added logic to load BPF based microcode to our previous version of UDP server with reuse_port based load balancing. You can find complete source code here. I decided to load it after we set flag SO_REUSEPORT and before actual bind(). And it did not work:

We will listen on :::2055 udp port

Setting reuse port

Loading BPF to implement random UDP traffic distribution over available threads

Successfully loaded reuse port BPF

Successful bind

We will listen on :::2055 udp port

Setting reuse port

Loading BPF to implement random UDP traffic distribution over available threads

Successfully loaded reuse port BPF

Can't bind on port: 2055 on host :: errno:98 error: Address already in use

Cannot create / bind socket

Started captureI did my best to read all the documentation again and again. I shared my broken code with community and asked for feedback. In same time I started looking for examples of successful use of SO_ATTACH_REUSEPORT_CBPF on GitHub and I found just few PRs and not so much about source code.

As for all relatively new features we may expect examples in Linux Kernel source code. I was lucky and I found reuseport_bpf.c in folder tools/testing/selftests/net of latest Linux kernel (6.4.12) which included complete test kit for SO_ATTACH_REUSEPORT_CBPF. After half hour of code removal I was able to make it fail in a similar way as my code. You can find complete source code of test here.

./reuseport_bpf

---- IPv4 UDP ----

Testing CBPF mod 10...

Testing CBPF mod 20...

./reuseport_bpf: failed to bind recv socket 0: Address already in useAt this moment I was pretty sure that this logic actually works as original Linux Kernel test suite does not fail but something was different from my implementation. After careful review of removed source code I found one place where another flag SO_REUSEADDR was set in addition to SO_REUSEPORT. I decided to try this idea and added SO_REUSEADDR right after SO_REUSEPORT call. You can find complete source code here. Success. It did not fail.

Can we be sure that it works? SO_REUSEADDR is clearly has nothing to do with load balancing and fixed our issue just by lucky accident. I did expect that it may break something and I have to repeat production tests to confirm that balancing works as expected.

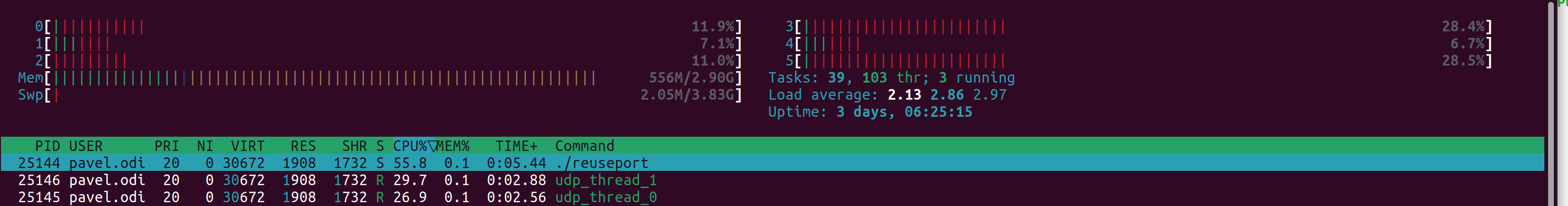

Luckily for us everything worked just fine:

Thread ID UDP packet / second

Thread 0 41263

Thread 1 41224

Thread 0 41374

Thread 1 40995

Thread 0 41260

Thread 1 41265And CPU was distributed evenly

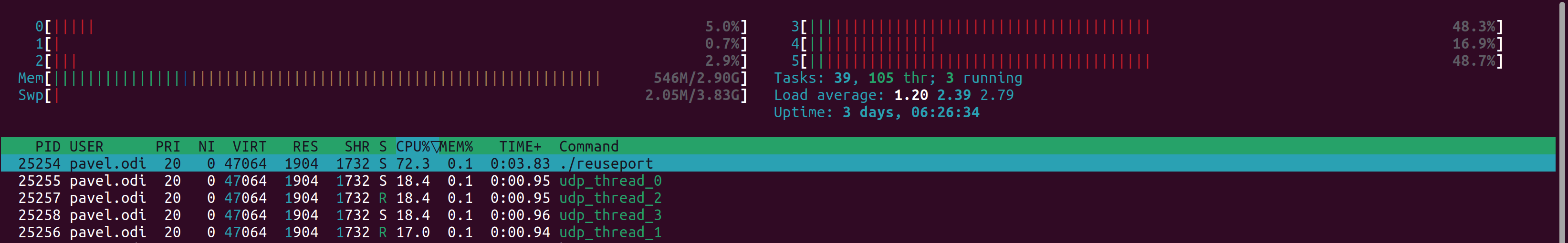

Just out of curiosity I increased number of worker threads to 4 and got same perfect results as with 2:

It may be time to declare success but we have to keep in mind that SO_REUSEADDR has pretty nasty side effects and can be clearly considered as insecure. It allows other apps on same machine to listen on this port.

I explained my original issue to Vadim Fedorenko from Meta and after checking source code of Linux kernel he shared with me that after we assign BPF program to socket then we will not able to find more sockets to port reuse_group. Then I got an idea to get rid of SO_REUSEADDR workaround by doing small structural change of my logic.

I moved logic to assign BPF for socket after we created all sockets for reuse port group and called bind() for each of them. At this moment we will not need to add more sockets and we can just assign BPF microcode for socket. You can find complete source code here.

I run production tests to confirm that everything worked just fine without any need of SO_REUSEADDR workaround.

Thread ID UDP packet / second

Thread 0 44711

Thread 1 44368

Thread 0 45027

Thread 1 45253It was clearly rocky road but I'm very happy to make this code work. It opens lots of opportunities to make UDP servers more efficient and increase their throughput. I hope these tricks will be addressed in future versions of Linux kernel. Meanwhile you can use my example as reference as it will work for all current versions of Linux Kernel without any issues.