High speed network traffic processing on Linux: part 1

Setting expectations

After reading this article you will have a high-level understanding of the best approaches for developing high-performance user space Linux applications which work with live network traffic.

Keywords

We will talk a lot about the following technologies (in the order the author worked with them): pcap, PF_RING, Netmap, AF_PACKET, DPDK, AF_XDP, XDP. This article will be of interest to people who work in HFT and focus on low latency.

Who am I?

My name is Pavel Odintsov and I'm the original author and maintainer of the open source DDoS detection and mitigation tool FastNetMon Community (C++) and CTO of FastNetMon LTD, 🇬🇧, London. I've been working with live network traffic from the perspective of a software engineer and architect since 2012, and you may find my most famous article on this topic here.

My background

I hold a master's degree in software engineering but I never got any formal education in networking. I started learning this topic on my own during the early stages of my career as part of Facebook interview preparation (SRE, then Production Engineer) and right after that as part of FastNetMon development.

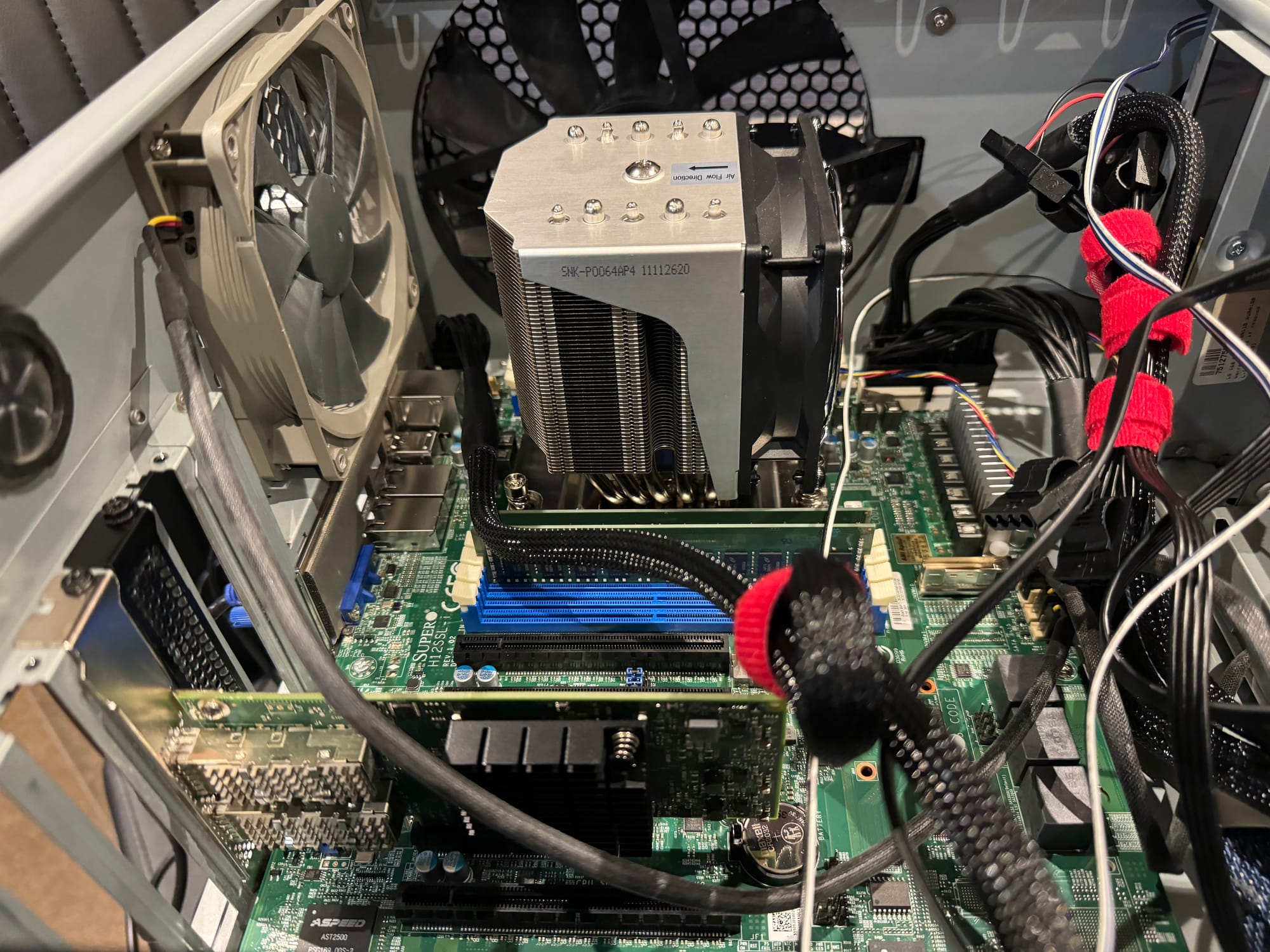

At the time I started learning networking I had very solid experience with Linux internals from the perspective of a system administrator (namespaces, cgroups, storage, memory management, process management, NUMA, LVM, MD, KVM, OpenVZ, LXC) and had thousands of machines under my supervision as part of my CTO role at a cloud compute company.

During the early steps of my professional career I did my best to avoid touching networks, as this topic did not fit well in my software engineering brain. Then I got lucky and Facebook tried to hire me for their system operations team.

Facebook HR explicitly mentioned that I would have a separate interview about computer networks, so I started my journey with Volumes 1 and 2 of TCP/IP Illustrated.

After finishing both books I started polishing my networking skills by reading source code, writing source code, talking with people, watching presentations and doing my own experiments, either as part of FastNetMon development or for fun.

What is network traffic?

In this article we will assume that network traffic is all the bytes and packets transmitted and received over a medium (copper, wireless, optics or other). For the purposes of this article we completely exclude topics which involve high-level protocols such as DNS, HTTP, QUIC or other application-level protocols (usually called L7).

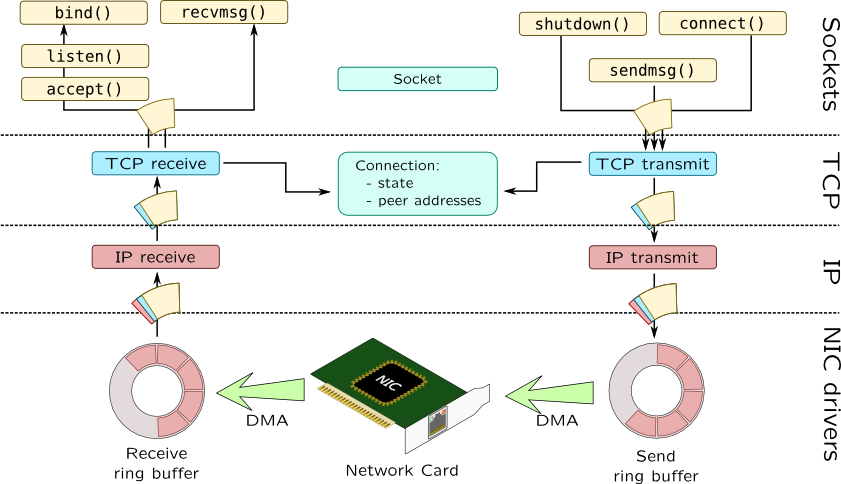

We will focus only on layers 3 and 4 of the OSI model, as regular telecom equipment (routers, switches) does. On the diagram above, kindly provided by Sergey Klyaus, we will cover only the two bottom levels: IP and NIC drivers.

What is traffic processing?

There are two main tasks which we will consider as traffic processing:

- Traffic capture. When we receive traffic from the network for our own needs.

- Traffic generation. When we send traffic to the network.

By combining these two tasks we get a third task, which we will cover too:

- Traffic forwarding / routing. When we receive and send traffic at the same time.

Most of my own expertise lies in the field of traffic capture, so I'll focus on it more. This does not mean that the technologies I cover do not apply to traffic generation. The majority of them support both RX and TX very well, as both are a must for a mature traffic processing technology.

What is special about traffic processing?

Even experienced software engineers may ask what is special about network traffic and why we cannot write programs for it the way we are used to writing user space applications like HTTP or DNS servers.

Usually we measure the performance of application-level protocols in queries per second (QPS). For both HTTP and DNS we can use QPS as a measure of performance. We can consider any application-level program highly loaded when it exceeds tens of thousands of queries per second.

Of course, you may hear some fancy engineering stories from big tech, as some of their apps can do millions of queries per second. Truly impressive, but these are clear outliers and pretty rare to see in the wild.

Modern networks are lightning fast

Network traffic is special because of the mind-blowing amounts of transferred data and the packet rates involved.

You may be used to measuring the performance of network interfaces in bandwidth, but it's not the most important metric we have. Another way to measure the performance of a network interface is packet rate. From a software engineering perspective this metric is even more important than bandwidth, as it's very close to QPS in its nature.

You're way more likely to face issues operating your application under a high packet rate than under high bandwidth.

What is packet rate? It's the number of packets we can transfer in 1 second. To calculate this value from a known bandwidth we need to know the packet size, and that's where things get complicated.

In the wilderness of the Internet you're very unlikely to see packets smaller than 64 bytes (TCP SYN with no options) or larger than 1500 bytes, but in local networks you may see packets of up to 9000 bytes or even more.

Assuming that we deal with the corner case (don't think that this case is rare: a large portion of DDoS attacks are implemented that way) and all our packets are 64 bytes long, we will have 14.8 million packets per second (Mpps) on a 10G link.

Isn't it a lot?

As of 2024 the network industry is heading towards a slow deprecation of 10G interfaces and a transition to 25G, and to 100G for the backbone.

So we need to deal with ~37 Mpps for 25G and ~148 Mpps for 100G respectively. These values are usually referred to as line rate.
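The line rate figures above can be reproduced with simple arithmetic. Below is a minimal sketch, assuming standard Ethernet framing: the 64 bytes are the minimum frame size including the frame check sequence, and every frame additionally occupies 8 bytes of preamble and start-of-frame delimiter plus 12 bytes of inter-frame gap on the wire. The function and constant names are made up for this illustration, and Python is used here purely as a calculator, not as a data path language.

```python
# Back-of-the-envelope Ethernet line rate calculator (illustrative names).

PREAMBLE_SFD_BYTES = 8     # preamble (7) + start-of-frame delimiter (1)
INTERFRAME_GAP_BYTES = 12  # mandatory idle time between frames, in byte times


def line_rate_pps(link_bps: float, frame_bytes: int = 64) -> float:
    """Maximum packets per second for a given link speed and frame size."""
    wire_bits = (frame_bytes + PREAMBLE_SFD_BYTES + INTERFRAME_GAP_BYTES) * 8
    return link_bps / wire_bits


if __name__ == "__main__":
    for name, bps in (("10G", 10e9), ("25G", 25e9), ("100G", 100e9)):
        print(f"{name}: {line_rate_pps(bps) / 1e6:.2f} Mpps at 64-byte frames")
    # 10G: 14.88 Mpps at 64-byte frames
    # 25G: 37.20 Mpps at 64-byte frames
    # 100G: 148.81 Mpps at 64-byte frames
```

The same function shows why packet size matters so much: with 1500-byte frames a 10G link carries only about 0.82 Mpps, roughly 18 times fewer packets for the same bandwidth.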

That's the exact reason why traffic processing is tricky. If these numbers did not blow your mind, I would like to put them into the perspective of latency.

Latency is king

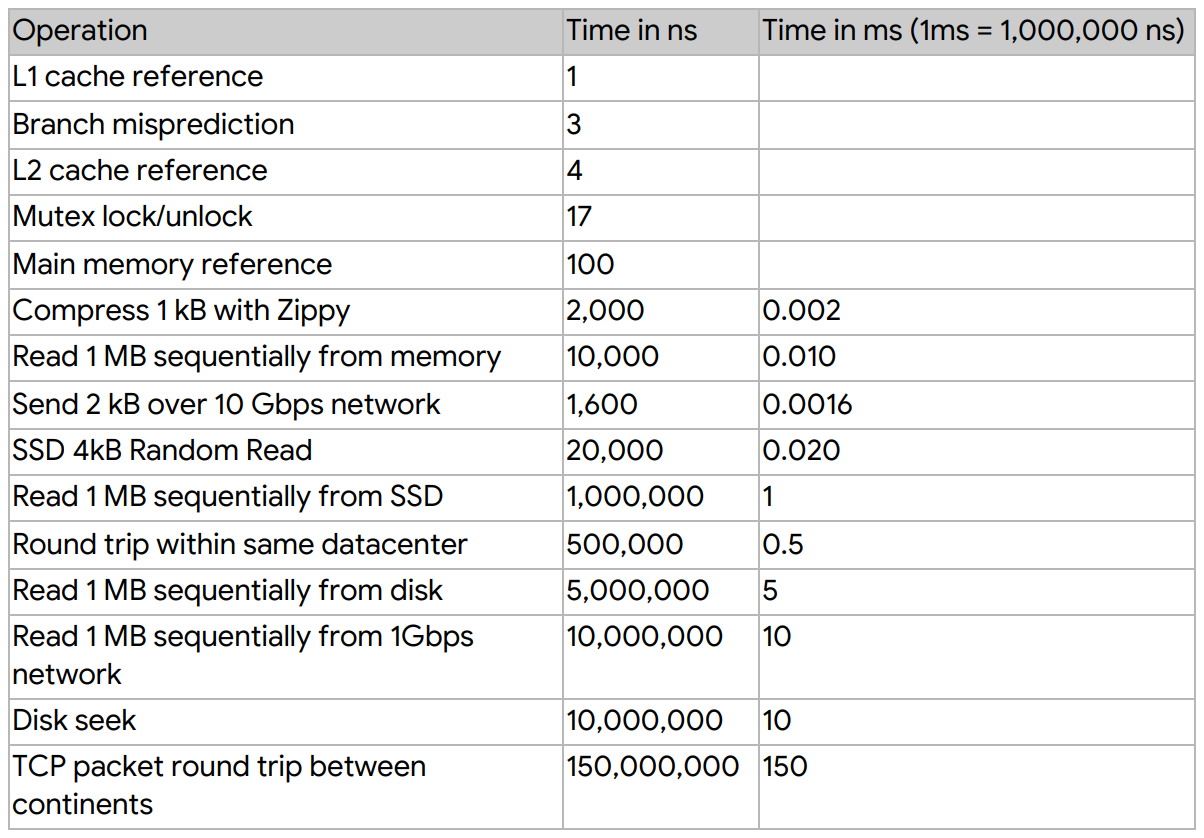

Let's divide 1 second by 148 million operations and we get ~7 nanoseconds per packet. If that still does not scare you, then I would like to remind you of some numbers from the Google SRE handbook which every single software developer must know if they want to work with traffic.

An L2 cache lookup is one of the fastest operations modern CPUs can do, and it takes only slightly less than the time available to process 1 packet on a 100G network interface running at line rate.

As you may see, even the fastest multi-channel DDR5 memory will very likely be slower than the time available for processing 1 packet.

I provided all these numbers just to highlight that when we work with traffic we fight for each nanosecond and each memory copy, as even a single memory copy somewhere deep in the network stack can dramatically degrade performance.
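To make the time budget more tangible, here is a small sketch that converts a packet rate into nanoseconds per packet and CPU cycles per packet. It assumes a single core running at a fixed 3 GHz handles the whole stream, which is a simplification chosen only for illustration, and the function names are hypothetical. The packet rates are the rounded values used in this article.

```python
# Per-packet time budget at line rate (illustrative names and assumptions).

ASSUMED_CPU_HZ = 3e9  # assumption: one core at a fixed 3 GHz clock


def ns_per_packet(pps: float) -> float:
    """Time available for one packet, in nanoseconds."""
    return 1e9 / pps


def cycles_per_packet(pps: float, cpu_hz: float = ASSUMED_CPU_HZ) -> float:
    """CPU cycles available for one packet on a single core."""
    return cpu_hz / pps


if __name__ == "__main__":
    for name, mpps in (("10G", 14.8), ("25G", 37.0), ("100G", 148.0)):
        pps = mpps * 1e6
        print(f"{name}: {ns_per_packet(pps):.1f} ns, "
              f"{cycles_per_packet(pps):.0f} cycles per packet")
    # 10G: 67.6 ns, 203 cycles per packet
    # 25G: 27.0 ns, 81 cycles per packet
    # 100G: 6.8 ns, 20 cycles per packet
```

Under these assumptions a 100G link at line rate leaves around 20 cycles per packet on one core, which is why every extra memory access in the hot path is visible.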

Dare to think about storing traffic to disk even after seeing these numbers? Just don't.

Why is programming language selection crucial?

I know that any mention or recommendation of a programming language may cause controversy, but network traffic processing is a field where we cannot skip this topic.

To keep latency under control and be able to process an incredible amount of data with minimum overhead, I would personally look at the following languages (lexicographic order):

- Assembler (only for optimisations of selected operations and critical functions)

- BPF, eBPF (well, you may be surprised, but it's a programming language)

- C

- C++

- Rust

- Add your favourite systems programming language which allows manual memory management and has solid support for multi-threading

You may be tempted to try the following languages for network traffic processing, but I strongly advise against it:

- Perl

- Python

- Ruby

- Go

- Java

The reasons are simple: these languages either do not provide an easy way to work with memory or have issues with multi-threading.

What's next?

In the second part of this article I'll describe in detail all the libraries I have experience with and then I'll provide conclusions.

Can I have answers right now?

We all live in times when our attention span is rapidly shrinking. I admire your ability to reach the end of this article and I have a reward for you.

If you're going to build an application which works like a standalone router/switch/firewall, then I recommend looking at DPDK and VPP (more solid and high level).

If you would like to add some network optimisations or filtering for a particular protocol within the boundaries of the same machine (like iptables), then look at XDP.

If you're looking to capture and transmit traffic at relatively slow speeds between 1G and 10G with an acceptable minimum of packet loss, then look at AF_PACKET v3, as it's very easy to work with. If you want to do the same in an environment where packet loss is critical and at 40G-100G speeds, then look towards AF_XDP.

Thank you!